Fighting Deepfakes, Coalition Targets Authenticating Media Provenance

As misinformation and deepfakes race across the internet, news organizations are working to help their audiences verify the authenticity of digital media.

The world’s first open source standard for certifying digital provenance was published last month, and the code, which is more sophisticated than mere watermarking, is being added to programs like Photoshop. With the standard, changes to a piece of digital media can be tracked, and its provenance verified, at every step of a news organization’s workflow.

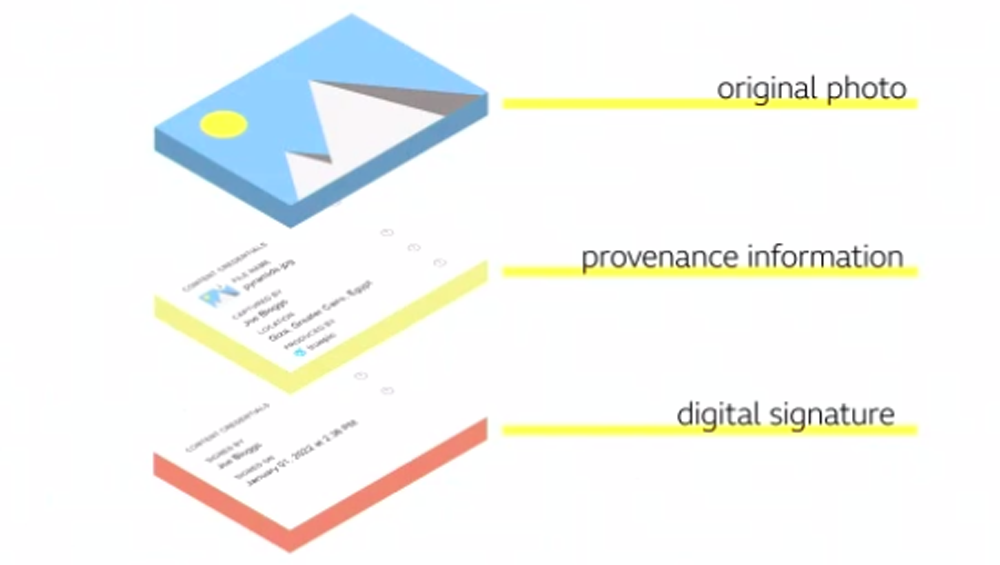

The Coalition for Content Provenance and Authenticity (C2PA) authenticity certification binds provenance information to a piece of digital media.

During an executive update from the C2PA on Tuesday, speakers said the group is weighing privacy concerns raised by the metadata attached to digital media. Over the next couple of years, they hope a common technical standard for media provenance will be more widely embraced.

“We live in this dangerous landscape of disinformation,” said Jatin Aythora, head of R&D at the BBC, a Project Origin member. That makes it important, he said, for audiences to be able to verify the authenticity of a piece of digital media.

The C2PA standard, delivered in January, arose from a joint effort between two groups: the Adobe-led Content Authenticity Initiative (CAI), which focuses on providing a history of digital media, and Project Origin, a Microsoft- and BBC-led initiative that focuses on tackling disinformation in digital news.

The authenticity certification works by binding provenance information to a piece of digital media through its entire journey. Every edit that is made is recorded and signed and bound to that media in the form of a tamper-evident record. Content credentials allow consumers to view all changes made to the media and confirm the media hasn’t been tampered with.

Take a digital picture of the Brooklyn Bridge shot on a camera running Truepic software that implements the C2PA standard. The provenance information is immediately sealed to the photo with a tamper-evident cryptographic signature. If that photo is sent to an editor at The New York Times, the editor could inspect the manifest for authenticity before opening the photo in Photoshop to prepare it for publication. All edits made in Photoshop would be added and locked into the photo’s provenance chain, giving the viewer a complete picture of how the content came to be.
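The idea of a signed, tamper-evident provenance chain can be illustrated with a minimal Python sketch. This is not the C2PA manifest format or any C2PA library: the function names (`append_record`, `verify_chain`), the record fields and the demo key are all hypothetical, and a shared-secret HMAC from the standard library stands in for the certificate-backed public key signatures the standard relies on.

```python
import hashlib
import hmac
import json

# Hypothetical demo key. Real C2PA signing uses certificate-backed
# asymmetric signatures, not a shared HMAC secret.
DEMO_KEY = b"demo-signing-key"


def _digest(data: bytes) -> str:
    """SHA-256 hash of the media bytes, as a hex string."""
    return hashlib.sha256(data).hexdigest()


def _sign(payload: dict, key: bytes) -> str:
    """Sign a record's payload (HMAC used here as a stand-in signature)."""
    message = json.dumps(payload, sort_keys=True).encode("utf-8")
    return hmac.new(key, message, hashlib.sha256).hexdigest()


def append_record(chain: list, action: str, media: bytes,
                  key: bytes = DEMO_KEY) -> list:
    """Return a new chain with a signed record for this capture or edit."""
    payload = {
        "action": action,
        "media_hash": _digest(media),
        # Linking to the previous signature makes the history tamper-evident.
        "prev_signature": chain[-1]["signature"] if chain else None,
    }
    return chain + [dict(payload, signature=_sign(payload, key))]


def verify_chain(chain: list, media: bytes, key: bytes = DEMO_KEY) -> bool:
    """Check every signature and link, then match the final hash to the media."""
    prev_signature = None
    for record in chain:
        payload = {k: v for k, v in record.items() if k != "signature"}
        if payload.get("prev_signature") != prev_signature:
            return False
        if not hmac.compare_digest(record["signature"], _sign(payload, key)):
            return False
        prev_signature = record["signature"]
    return bool(chain) and chain[-1]["media_hash"] == _digest(media)


# Usage: a capture followed by one edit, mirroring the photo example above.
original = b"raw pixels of a bridge photo"
history = append_record([], "captured", original)
edited = original + b" (cropped)"
history = append_record(history, "cropped", edited)

print(verify_chain(history, edited))         # True
print(verify_chain(history, edited + b"!"))  # False: media changed after signing
```

Because each record's signature covers the hash of the media and the signature of the record before it, changing either the file or any earlier entry in the history causes verification to fail.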

“The technology is made possible by improvements in public key cryptography and secure distributed ledgers,” said Eric Horvitz, chief scientific officer at Microsoft, which is one of the Project Origin members. “It works by immutably embedding info about the content and its source and history as metadata that travels along with the digital media.”

Andy Parsons, senior director of the Adobe-led Content Authenticity Initiative, said Adobe’s Photoshop makes it possible to make substantial edits to images, well beyond what can be done with programs like Snapchat.

“Photoshop, of course, [is] synonymous with manipulating photos, good or bad,” he said.

Adobe has been focused on the creator ecosystem and proving that sealing provenance information into a digital file can be done without impeding workflow, he said.

“Nothing speaks louder than working code. It’s one thing to write a standard, but it’s more important to see this in production,” Parsons said.

The standard is in prototype with Photoshop, he said, and the expectation is to add it to other programs like Lightroom.

Marc Lavallee, head of R&D at The New York Times, a Project Origin member, said it will likely be a multi-year journey to build provenance tracking into workflows.

“We need to make sure the needs of our industry are baked into every step of the process,” he said. “Developing best practices requires practice.”

It’s crucial, added IPTC Managing Director Brendan Quinn, that software vendors aim for true interoperability, down to “which metadata fields should be where.”

Vendor support will make it possible for small newsrooms and agencies to “just flip a switch so their content will be C2PA right out of the box,” Quinn said.

Horvitz noted that the provenance information could create unforeseen problems and harm users.

Even “best-intentioned” technologies have been used in “creative and malevolent ways,” he said, adding that the group is considering these concerns.

Parsons said no sophisticated specification is “straightforward to implement.”

He said he expects most potential implementers of the technology will skim the specifications, read the guidance document regarding potential threats and harms and then look for open source code to fold into their existing tools.

Using open source code “takes care of the heavy lifting” and helps with interoperability to make lives easier, he said.

“The journey forward is somewhere between very simple and very difficult,” Parsons said.
