TVN Tech | Pandemic Tees Up AI’s Role In Archives

With so much live programming sidelined by the pandemic, artificial intelligence may finally graduate from buzzword to viable tool, helping broadcasters find replacement content in their archives.

The absence of sports and other live programming has left many broadcasters scrambling to fill the gaps, leading some to turn to their archives for content that can be repurposed into specials or other substitute programs. The problem is that many find those archives to be the equivalent of a massive, very messy closet, and the older a clip is, the less likely it is to have enough metadata to make it retrievable.

“In the old days, you didn’t store metadata with your files because everything was on tape,” says Debra Slater, director, Three Media. “People back then didn’t realize how comprehensive metadata would be in the future, and without the metadata, you can’t go find your content.”

Enter AI's first potential application: bringing order to an archive in disarray.

“Many of the archives are hard to search because people don’t even know what is in them,” says Eric Bassier, senior director of product marketing at Quantum Corp. “How companies are employing AI now is they are starting to use indexing algorithms that have been developed in the cloud to basically go and index their archives. Step number one is to index the archives, make them a little more online and more searchable.”

In older archives where little or no metadata exists, AI can sift through the material and perform two key organizational functions. The first is transcribing the commentary within a given clip from speech to text, creating a structured data set that can then be searched.

The second focuses on video. "You take your feeds and put them through the video AI and [it] will … recognize places, objects, colors, logos, graphics," says Jerome Wauthoz, Tedial's VP of products. Crucially, it will also recognize faces.
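To make the two functions above concrete, here is a minimal sketch of how timecoded speech-to-text words and video-recognition labels could feed one searchable index. It is an illustration under stated assumptions, not any vendor's system: the `ArchiveIndex` class, its methods and the `(offset_seconds, term)` input format are all hypothetical, and real products use far richer metadata stores.

```python
from __future__ import annotations

from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Hit:
    clip_id: str
    seconds: float  # offset into the clip where the term occurs
    source: str     # "speech" (transcript) or "video" (recognition label)


class ArchiveIndex:
    """Hypothetical in-memory inverted index of AI-generated metadata."""

    def __init__(self) -> None:
        # term -> every place it was heard or seen
        self._postings: dict[str, list[Hit]] = defaultdict(list)

    def add_transcript(self, clip_id: str, words: list[tuple[float, str]]) -> None:
        # Assumed input: (offset_seconds, word) pairs from a speech-to-text engine
        for seconds, word in words:
            self._postings[word.lower()].append(Hit(clip_id, seconds, "speech"))

    def add_labels(self, clip_id: str, labels: list[tuple[float, str]]) -> None:
        # Assumed input: (offset_seconds, label) pairs from face/object/logo recognition
        for seconds, label in labels:
            self._postings[label.lower()].append(Hit(clip_id, seconds, "video"))

    def search(self, *terms: str) -> set[str]:
        """Return IDs of clips that match every query term."""
        matches: set[str] | None = None
        for term in terms:
            clips = {hit.clip_id for hit in self._postings.get(term.lower(), [])}
            matches = clips if matches is None else matches & clips
        return matches or set()


index = ArchiveIndex()
index.add_transcript("clip-001", [(12.0, "Mayor"), (12.4, "resigns")])
index.add_labels("clip-001", [(30.0, "podium")])
index.add_labels("clip-002", [(5.0, "podium")])
print(sorted(index.search("podium")))  # ['clip-001', 'clip-002']
```

The design point is that once speech and pictures are both reduced to searchable terms, a single query can combine what was said with what was on screen, which is what lets a poorly tagged clip surface at all.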

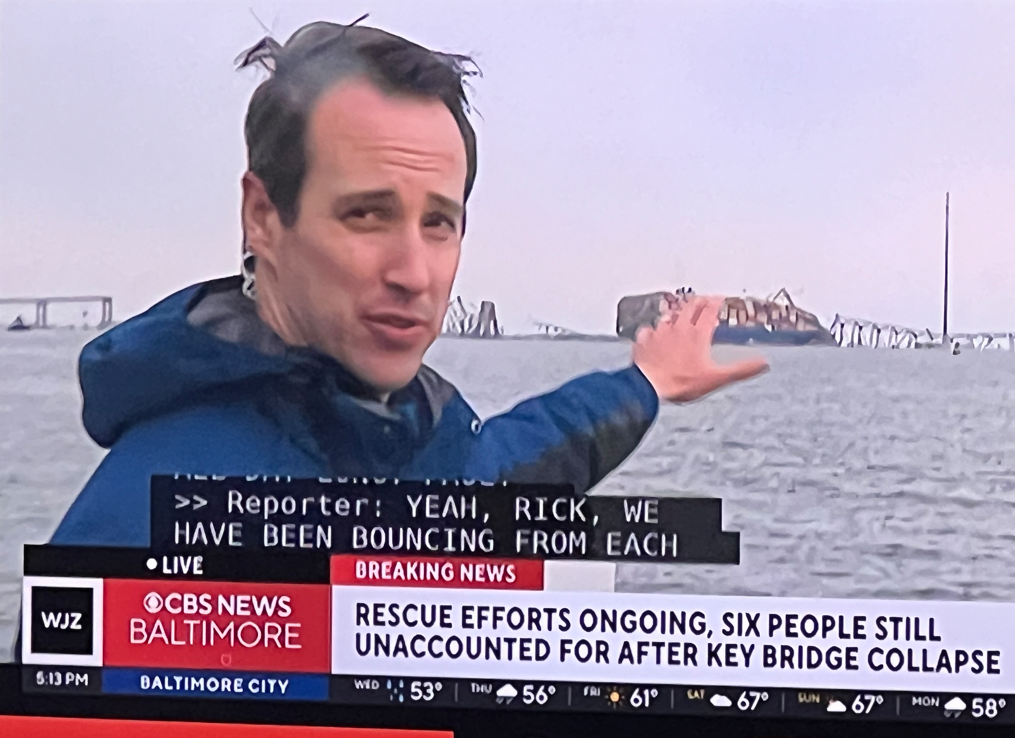

One major upshot is that a news organization, for example, can suddenly lay its hands on loads of assets once buried anonymously in its archives and give them new life in documentary material. Michael Arthur, SVP of licensing at Veritone, has done this kind of work with CBS News and its archives.

CBS might deliver all of its content related to, say, the Son of Sam murders, “and then we will run it through transcription, object recognition and facial recognition,” Arthur says. “We did a segment on David Berkowitz and found a really nice little gem of his doorbell ringer in his apartment complex with the name Berkowitz on it.”

AI makes the retrieval process “a hell of a lot easier,” Arthur says. “The intention of AI is to enhance or accentuate the metadata you may have on a piece of content.”

That's the upside AI can offer: accomplishing algorithmically what would otherwise take a Herculean investment of human labor and hours, an effort that only grows the farther back a poorly tagged archive stretches.

But of course, there are caveats. One of them is that AI can’t perform instantaneous magic on a media asset management system. It has to learn in order to maximize its efficacy and efficiency.

“A computer is only as good as its operator, and artificial intelligence is only as good as its trainer,” says Ben Davenport, portfolio manager for Arvato Systems. “You have got to be able to train the system appropriately with the content you want it to measure. And that is not something that can happen overnight.”

On the transcription front, for instance, accuracy varies based on the quality of the audio, whether the speaker is in a studio or field environment and whether other variables like multiple speakers, crosstalk or regional dialects factor in. Clearer single voices are easiest for AI to learn, but accuracy across the board improves with time and the volume of content that the AI is run against.

Veritone, Arthur says, runs two or three different transcription engines against a file. The engines essentially check one another, verifying what the others have heard, which yields ever cleaner speech to text. "The best I have ever seen is probably about 92% accurate, and I'm sure it has gotten better than that," he says.
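The article doesn't describe how Veritone combines its engines, so the following is only a generic illustration of the idea: a word-by-word majority vote across several transcripts. It assumes the transcripts have already been aligned to the same number of word slots (production approaches such as NIST's ROVER perform that alignment first), and the function name `vote_transcripts` is hypothetical.

```python
from __future__ import annotations

from collections import Counter


def vote_transcripts(transcripts: list[list[str]]) -> list[str]:
    """Combine pre-aligned transcripts by per-position majority vote.

    Assumption: every transcript has the same number of word slots.
    """
    if not transcripts:
        return []
    length = len(transcripts[0])
    if any(len(t) != length for t in transcripts):
        raise ValueError("transcripts must be pre-aligned to the same length")
    combined = []
    for words in zip(*transcripts):
        # Counter.most_common lists equal counts in first-seen order, so a tie
        # goes to whichever engine appears earliest in the list.
        winner, _count = Counter(words).most_common(1)[0]
        combined.append(winner)
    return combined


engines = [
    ["the", "mayor", "said", "yes"],
    ["the", "mare", "said", "yes"],
    ["a", "mayor", "set", "yes"],
]
print(" ".join(vote_transcripts(engines)))  # the mayor said yes
```

Each engine makes a different mistake here, but because the errors don't line up, the vote recovers the sentence none of them produced on its own. That is the intuition behind running several engines against the same file.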

The same principle goes for facial or object recognition. “Companies need a big body of data to do that, and it just takes time to train and make the algorithms better and better,” Quantum’s Bassier says.

If training time is one caveat, the intertwined complexity and cost of applying AI to archives are the others. This is especially true for broadcasters coming to the process from a cold start.

"A lot depends on what their infrastructure looks like and what their starting point is," Bassier says. "If they have an archive that was made on digital tape, that is just harder to index."

Veritone’s Arthur adds: “A lot of folks put [content] in a can, put it in an inexpensive piece of real estate and they shut the door and kind of forget about it. They really haven’t done a tremendous amount of what I would call putting the archive in the gym, getting it in shape and making it as healthy as possible.”

And just like many of the millions of people homebound during the coronavirus and carrying a few extra pounds of flab, getting back into shape is a question of priorities and increments.

"There are baby steps," Arthur says to broadcasters sitting on a terabyte or perhaps even a petabyte of content, the very thought of managing it likely enough to send them diving back onto the couch with a bag of potato chips.

“What we really want to do is prioritize the content,” he says. “Look at what you want to create, what is some of your most valuable content, the stuff we should get out in front right now. Let’s start there.”

Fair enough, but before most people sign up for a gym, they want to know the cost of membership, and for some companies it’s going to be steeper than for others.

“You are not going to go through all the archives suddenly and put everything through the AI,” Wauthoz says. “It’ll cost you a fortune. We need to be realistic. If you don’t have the data yet, going through the process to just reingest all your content through the AI to get the metadata is going to cost you a lot, but you can still do it on a case-by-case basis.”

Generally, these services are priced by the hour, with discounts for volume. One vendor, who asked not to be identified because pricing information is sensitive, says sample costs might be $0.05/minute for facial recognition and $0.10/minute for sentiment analysis using video AI. A broadcaster paying for 150 hours per month would be looking at around $3.99/hour, while one committing to 3,500 hours a month would see the price drop to $1.36/hour.
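The vendor's figures can be turned into rough monthly budgets with simple arithmetic, sketched below. Two assumptions are made that the article doesn't confirm: each volume tier bills every hour in the month at that tier's rate (no blended or marginal pricing), and, since the article doesn't say which services the tiered hourly rates cover, the per-minute prices are converted separately rather than combined with them. The helper names are hypothetical.

```python
from decimal import Decimal, ROUND_HALF_UP

MINUTES_PER_HOUR = 60


def hourly_rate(per_minute: Decimal) -> Decimal:
    """Convert a per-minute price into a per-hour price."""
    return per_minute * MINUTES_PER_HOUR


def monthly_bill(hours: int, per_hour: Decimal) -> Decimal:
    """Assumed flat tier: every hour in the month is charged at the tier's rate."""
    return hours * per_hour


def discount_percent(base: Decimal, discounted: Decimal) -> Decimal:
    """Percentage saved per hour at the discounted rate, to one decimal place."""
    saved = (1 - discounted / base) * 100
    return saved.quantize(Decimal("0.1"), rounding=ROUND_HALF_UP)


print(hourly_rate(Decimal("0.05")))                        # facial recognition: 3.00
print(hourly_rate(Decimal("0.10")))                        # sentiment analysis: 6.00
print(monthly_bill(150, Decimal("3.99")))                  # 598.50
print(monthly_bill(3500, Decimal("1.36")))                 # 4760.00
print(discount_percent(Decimal("3.99"), Decimal("1.36")))  # 65.9
```

`Decimal` is used instead of floats so the currency amounts come out exact. On these assumptions, the larger commitment costs roughly 66% less per hour but still means a monthly bill about eight times higher, which is why Wauthoz's case-by-case approach matters.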

For a low-risk approach, IBM is offering a free 90-day trial of its IBM Watson Knowledge Catalog, says David Wohlford, senior product marketing manager. "Customers can plug in, scan all the data and then realistically start searching for things and creating their own metadata catalog," he says of the software, which isn't built specifically for media companies but can easily be adapted to their needs.

Craig Bury, partner and CTO at Three Media, acknowledges that for any broadcaster, now is certainly not an optimal time to think about incurring new technology expenses as ad revenues dwindle amid the pandemic.

“People are going to be reluctant to put any kind of serious capital behind what could be a flight of fancy, but if a provider comes in to offer them a service on an op-ex kind of basis, it becomes far more interesting because the pain is taken away,” he says.

That, coupled with AI’s ability to fill programming holes, may finally move it from the realm of buzzword into an everyday tool.

“We see this as a pretty significant opportunity,” Bury says. “The events that we are living through are forcing a lot of organizations to completely rethink the way they work, and AI will most certainly play a role there.”
