Alphabet and Microsoft help Wall Street clinch its best week in nearly six months on Friday.

The entertainment giant would install a committee of top executives to run an "Office of the CEO" on an interim basis; no decision has been made on Bakish’s future.

Comcast said chairman and chief executive Brian Roberts' pay package for 2023 totaled $35.47 million, up from $32 million the year before. It included a $2.5 million base salary, stock awards valued at about $15 million, $9.2 million in option awards and $8.55 million in what’s called non-equity incentive plan compensation (like a cash bonus).

In a rare development, two local news organizations in Colorado Springs — the Gazette daily newspaper and ABC affiliate KRDO — have ripped each other over the way they are covering something that has been central to the city’s identity for nearly half a century.

CBS’s Lingo revival premiered in mid-January 2023 and was renewed for Season 2 just six weeks later. A few weeks after that, the game show was pulled from CBS’s Wednesday lineup, where it had been sandwiched between Survivor and the freshman drama True Lies, and replaced with drama reruns. But now there's a Season 2 premiere date: Friday, May 24, at 8/7c, when it will return with back-to-back episodes, followed by weekly installments every Friday at 8.

Both daytime strips have been renewed through the end of the 2025-26 season on stations covering 95% of U.S. TV households. Judge Judy has been a daytime syndication stalwart since 1996, and that hasn’t changed even after Judy Sheindlin stopped producing new episodes of the series in 2021, after the show logged its 25th year. Judge Judy reruns at present average about 6 million viewers a week and the show still commands top daytime time slots around the country. Hot Bench, which features a panel of judges, is still actively delivering new episodes and is produced for CBS Media Ventures by Sheindlin and the Judge Judy team.

The cable giant continues to shed video customers as revenue remained unchanged at $13.67 billion, in line with analyst expectations.

CBS Sports has launched Champions League, a 24-hour streaming channel with nonstop goals and highlights from the flagship UEFA Champions League soccer club games out of Europe. The FAST channel featuring current season and historic game recaps will debut for free on Pluto TV and on connected TV sets through the CBS Sports app.

Even though Ellen DeGeneres is looking back at her talk show with a sense of humor, she can't deny that her unceremonious exit left deep wounds.

Poppy Harlow, who has been with CNN since 2008, is exiting the network. Harlow announced her decision in a memo to colleagues. She was offered a new role following the cancellation of CNN This Morning, but instead decided to leave, the network confirmed.

Scott R. Flick: "On Tuesday, the Federal Trade Commission announced a new rule banning employee noncompete agreements, treating them as harmful and an 'unfair method of competition.' This includes noncompetes in the broadcast industry, where they serve a vital purpose that was given short shrift by the FTC."

Canceled by Disney before it even aired, The Spiderwick Chronicles found a new home at Roku and has so far “delivered results beyond expectations,” its creator says.

The Los Angeles Times newsroom continues to feel the invisible hand of owner Patrick Soon-Shiong in coverage of his pharma research, home page choices and in pushing for livestream video.

With NBC’s #OneChicago in rerun mode one final time this season, CBS’s Survivor dominated Wednesday both in total audience and in the demo (with 4.9 million total viewers and a 0.7 demo rating). ABC's Not Dead Yet (2.2 million/0.2) was steady with its season (series?) finale.

There will not be a fourth season of Canadian comedy Run the Burbs. Co-creator and star Andrew Phung shared the news Thursday on Instagram that CBC has canceled the series after three seasons. The show airs in the U.S. on The CW.

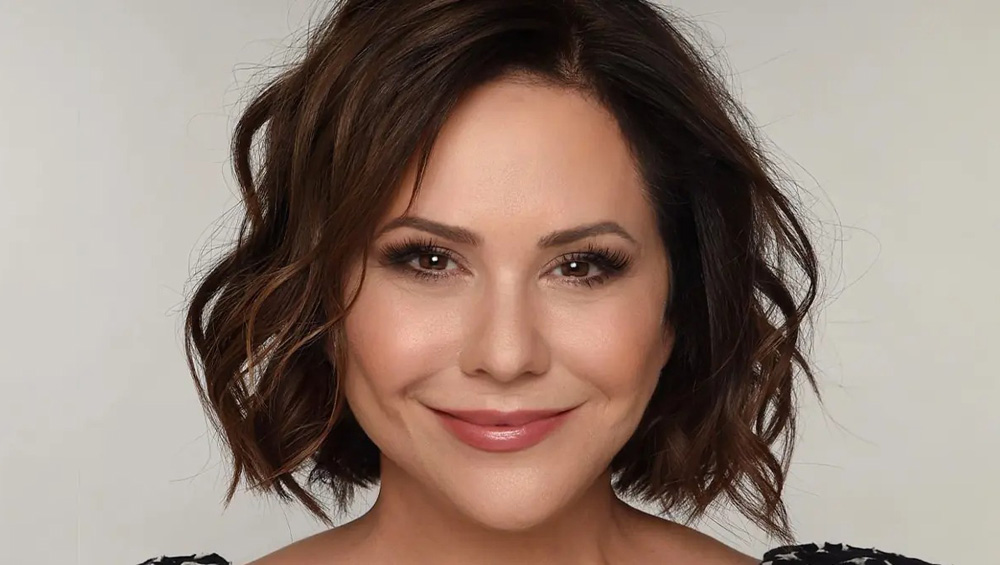

From Glee to The Golden Bachelor, Empire to The Dropout, Arrested Development to Abbott Elementary and 24 to 9-1-1, Shannon Ryan has played a critical role in the launch of countless TV series over the past three decades. Now president of marketing for Disney Entertainment Television, Ryan oversees marketing, publicity and communications for an unprecedented portfolio of more than 200 active series at any given time across Hulu, ABC, National Geographic, Disney Channel, Onyx Collective, Freeform and other platforms.

Netflix is investing in Leanne Morgan. The streamer has handed out a 16-episode, straight-to-series order for an untitled multicamera comedy starring the stand-up comedian. Comedy kingpin Chuck Lorre (Big Bang Theory, Two and a Half Men) co-created the series alongside Morgan and Susan McMartin.

The former CBS Evening News anchor has not appeared on CBS since he left the network in 2006, but will be the subject of a CBS Sunday Morning profile this weekend.

Netflix’s 3 Body Problem delivered its second straight No. 1 showing among streaming titles, leading the rankings for the last week of March. The acquired series top 10 had several new entrants, thanks in part to a change in how Nielsen reports the numbers.

Whistleblowing isn't unique to any industry. Yet the contrary outlook baked into many journalists, which can be a central part of their jobs, and generational changes in how many view activism have combined to make it probable that these sorts of incidents will continue.

Paramount and Skydance are inching closer to a potential merger that would see David Ellison’s media company buy out Paramount’s controlling shareholder Shari Redstone. According to individuals with knowledge of the talks, Skydance has agreed as part of a potential merger deal to infuse between $4.5 billion and $5 billion of fresh capital into Paramount, $2 billion of which would be used to buy Redstone’s shares and to settle the company’s debts. Ellison would become CEO of Paramount, replacing current CEO Bob Bakish, with former NBCUniversal CEO Jeff Shell serving as president.

Across the country, open positions at TV newsrooms stay vacant or draw a drizzle of poorly equipped, unimaginative applicants. Changes at journalism schools and in compensation, along with reframing how we think of applicants, could be among the things that change that.

The Peabody Awards on Thursday revealed its full list of nominations for its 84th edition, with high-profile TV series like The Bear, Bluey, The Last of Us, Reservation Dogs, Fellow Travelers, Blue Eye Samurai, Last Week Tonight, Jury Duty and Marvel’s Moon Girl and Devil Dinosaur among those making the cut.

Google and YouTube parent Alphabet blew away Wall Street estimates with revenue up 15% to $80.5 billion, driven by strong ad growth. The company also announced a major milestone: its first-ever dividend of 20 cents a share in June. The stock is up more than 12% after market close. During a call, CEO Sundar Pichai said YouTube TV has more than 8 million subscribers, a stat YouTube CEO Neal Mohan noted in February.

Roku posted solid first-quarter financials, with positive results in several key metrics illustrating the benefits of ongoing price inflation in the streaming sector. Net losses totaled 35 cents a share, far better than Wall Street analysts’ consensus estimate of 62 cents and much narrower than the $1.38 recorded in the year-ago quarter. Revenue of $754.9 million fell significantly short of the Street’s expectation for $848.6 million, but the company notched its third straight quarter of positive Adjusted EBITDA and free cash flow.

Shares of Snapchat parent Snap popped 26% in late trading after the social media network said revenue jumped more than expected last quarter to $1.195 billion, up 21% from 2023. The number and the outlook for the current quarter were nicely above Wall Street forecasts, as were daily active users, which hit 422 million.

Jobs posted to TVNewsCheck’s Media Job Center include openings for a director of sales, account executive, executive producer, meteorologist, weekend anchor, sales manager, senior newscast producer, digital video producer and reporter.

Streaming sales leaders from Gray Television, E.W. Scripps, Hearst Television, Ticker and Megaphone TV will share the latest developments in technology and strategy for OTT and FAST channels. Learn more about this critically important revenue source for broadcasters in a TVNewsCheck Working Lunch Webinar on May 16. Register here.