Scale Complicates Monitoring, But Tools Level Up To The Challenge

With many different ways to deliver video content and viewers skipping to the next channel at the slightest quality issue, broadcasters want high-tech monitoring and troubleshooting solutions to keep their viewers engaged.

Over-the-top (OTT) television, ATSC 3.0 and an avalanche of FAST channels have vastly complicated the quality of service (QOS) and quality of experience (QOE) monitoring landscape. Vendors have expanded their QOS/QOE offerings to include capabilities like more automation, faster troubleshooting, flexibility and decryption for streaming as well as monitoring for Society of Cable and Telecommunications Engineers (SCTE) triggers. And soon it will be easier to anticipate potential QOS/QOE problems.

Mediaproxy CEO Erik Otto says that scale has complicated the world of monitoring. Digital channels, FAST channels and ATSC 3.0 have created a domino effect that has increased the monitoring requirements for all broadcasters.

Mediaproxy’s Monwall hybrid-multiviewer for QOS monitoring.

“Even the ordinary station is now faced with scale at a level that only the much larger media companies or networks were dealing with,” he says. “For them to start to look at monitoring the quality requirements, that the station output is high quality output, the monitoring side has to be up to scratch.”

And broadcasters need to decide whether they want monitoring to be in the cloud, on-premises or hybrid, he says. “Certain tools are better suited to on-prem because of [the] nature of the streams” and the types of errors that are possible, he says. “Some errors only exist on the internet.”

Skyrocketing Complexities

Paul Briscoe, chief architect at TAG Video Systems, says quality monitoring has changed significantly as the number of ways to send signals from the broadcaster to the viewer has increased. In over-the-air television, quality was constant, he says.

“It was a simple world. We could predict accurately the customer’s experience,” he says. “It was broadcast quality.”

Mathieu Planche, Witbe CEO, says complexity has skyrocketed due to the many types of devices receiving content and the need for the highest bit rate.

“There are web streaming apps, ISPs, satellite boxes, smart TVs from 10 different manufacturers, gaming consoles, etc.,” he says. “It’s created a huge amount of complexity.”

Planche calls it a dual paradox: Broadcasters must test complicated parameters that have never been more important to measure. “It’s a dilemma. They must measure, but it’s never been harder,” he says.

And signals travel through a number of transport streams that the broadcaster has little or no control over.

Because of this, Qligent CEO Brick Eksten says broadcasters are focusing on end-to-end QOS monitoring, which means watching the middle mile and not just the broadcast of the signal and the receipt of the signal at the device.

Qligent’s Vision showing bitrate analytics.

“Today, they care about the middle mile,” he says. In short, he adds, if the broadcaster is not watching all those pieces, it’s impossible to know what level of service exists at any given time.

More Flexible Tools

As monitoring became more complex, the tools had to evolve.

Anupama Anantharaman, VP of product management at Interra Systems, says there has been a shift away from manual monitoring on large video walls, with humans “watching screens and looking for anomalies. That’s being replaced. People want a flexible way to monitor.”

The answer, she says, is alert-based monitoring, which flags only problems that need attention and helps speed up the troubleshooting process.

“Customers want help with root cause analysis,” she says. “That’s very, very important.”
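To make the idea of alert-based monitoring concrete, here is a minimal sketch in Python. It is not taken from Interra's or any other vendor's product. The `Sample` fields, the threshold values and the alert names are all assumptions chosen for illustration. The point is only that healthy streams produce no output, so operators see just the problems that need attention.

```python
from dataclasses import dataclass


@dataclass
class Sample:
    """One measurement for one stream (hypothetical fields)."""
    stream: str
    bitrate_kbps: float
    dropped_frames: int


# Assumed thresholds, for illustration only; real limits depend on the service.
MIN_BITRATE_KBPS = 2500.0
MAX_DROPPED_FRAMES = 5


def alerts_for(samples):
    """Return (stream, reason) pairs only for samples that breach a threshold."""
    alerts = []
    for s in samples:
        if s.bitrate_kbps < MIN_BITRATE_KBPS:
            alerts.append((s.stream, "low_bitrate"))
        if s.dropped_frames > MAX_DROPPED_FRAMES:
            alerts.append((s.stream, "dropped_frames"))
    return alerts


samples = [
    Sample("news-hd", 4800.0, 0),     # healthy: no alert
    Sample("sports-hd", 1900.0, 2),   # bitrate below the assumed minimum
    Sample("fast-movies", 3100.0, 12) # too many dropped frames
]
result = alerts_for(samples)
```

In this sketch only `sports-hd` and `fast-movies` are flagged, which is the difference between scanning a wall of screens and being told where to look.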

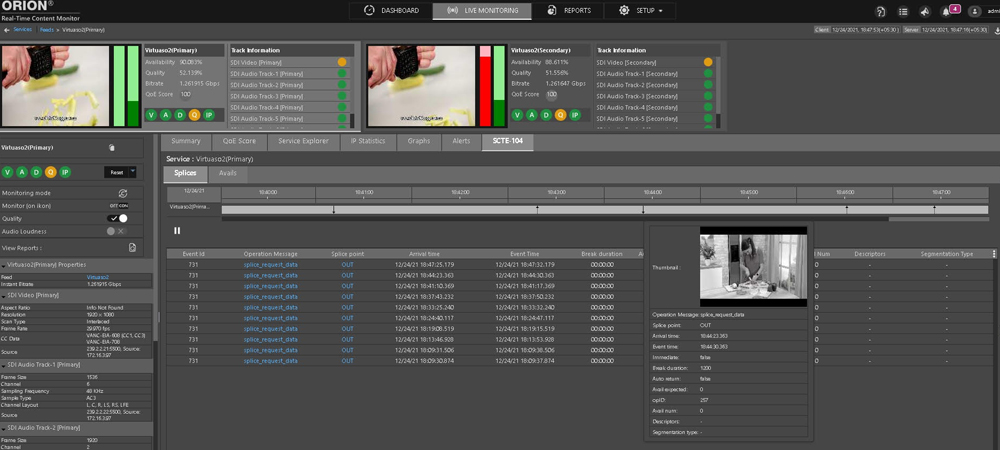

Interra’s Orion Central Manager traces the quality of the stream throughout the delivery chain, sending up alerts when issues are present, and the Orion 2110 probe, launched in 2022, monitors IP signals.

“That’s a fairly in-depth monitoring probe,” Anantharaman says.

Interra Systems’ Orion Real-Time Content Monitor.

Joel Daly, VP of product management at Telestream, says the company’s Argus platform monitors live delivery of video as well as video on demand. It flags any problems, and the aggregated information pushes to an interface to facilitate troubleshooting, he says. The aggregator integrates “thousands of streams, end to end” into a single dashboard, he says.

“If there is a problem, what is it? Where is it? Out of those thousand points, tell me. It reduces the time it takes to troubleshoot,” Daly says. “I know in real time what’s going on and understand the good and bad spots in my network to optimize everything.”
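A rough way to picture the "where is it?" question is to walk the delivery chain in order and report the first monitoring point that shows a fault. The Python sketch below is a simplified illustration, not Telestream's implementation. The probe point names and the `first_failing_probe` helper are hypothetical, and the sketch assumes that a fault propagates downstream from where it starts.

```python
def first_failing_probe(chain, healthy):
    """Return the first probe point, in delivery order, that reports a fault.

    chain   -- probe point names ordered from source to viewer
    healthy -- mapping of probe point name to True (ok) or False (fault);
               points missing from the mapping are treated as ok (an assumption)
    """
    for point in chain:
        if not healthy.get(point, True):
            return point
    return None


# Hypothetical delivery chain, ordered from the broadcaster to the viewer.
chain = ["playout", "encoder", "cdn_origin", "cdn_edge", "player"]
status = {
    "playout": True,
    "encoder": True,
    "cdn_origin": False,  # the fault starts here ...
    "cdn_edge": False,    # ... and shows up at every point downstream
    "player": False,
}
origin = first_failing_probe(chain, status)
```

Here three points report trouble, but the earliest one in the chain, `cdn_origin`, is the likely place to start troubleshooting.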

Tedial CTO Julián Fernández-Campón says the company’s smartWork NoCode Media Integration Platform is flexible, which allows customers to quickly add and integrate new services or tools to validate that OTT and OTA content is of the proper quality.

“It puts our customer in control. They can integrate the tools which are part of the ecosystem,” he says. The flexibility means customers are able to select the tools that are most suited to what they want to monitor, he adds.

Ralph Bachofen, VP of sales and marketing at Triveni, says broadcasters are starting to encrypt certain programs within their streams.

Triveni’s decryption solution, which the company will highlight at this year’s NAB Show, gives the broadcaster “a full view of what’s going on in that program, even if it’s encrypted,” he says. “From a QOS perspective, you need to be able to decrypt.”

And for broadcasters who are sending out ATSC 3.0 signals, monitoring is “more complicated because the standard is more complicated,” he says.

Triveni Digital’s StreamScope XM ATSC 3.0 Monitor audits and logs quality of service for NextGen TV.

The company’s StreamScope ATSC 3.0 product not only analyzes the stream, he says, but it also helps broadcast engineering staff “who may not know the technology as well as they know the older technology” learn ATSC 3.0.

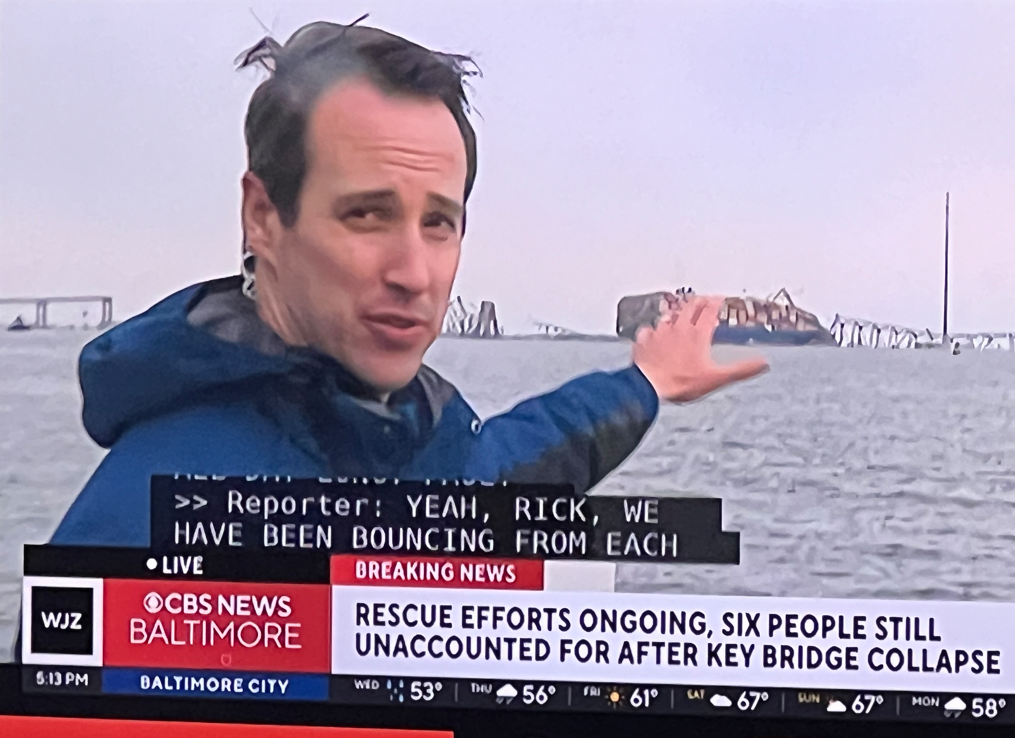

Ken Rubin, SVP at Actus Digital, says knowing just where a problem happened is vital to being able to quickly resolve it.

Actus Synchro “shows the probe points and shows exactly where the issue happened so they can immediately resolve it,” he says.

The company’s monitoring platform can also monitor whether downstream ads were placed correctly. The ability to cross-reference SCTE triggers with issues when an ad was shown lets broadcasters know if they need to do a make good to the customer, he says.

“They can proactively work on that, and not wait for them to complain,” Rubin says.
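Cross-referencing ad triggers with logged issues amounts to an interval-overlap check: Any ad whose airtime overlaps a recorded fault is a candidate for a make good. The Python sketch below shows that logic under stated assumptions. It is not Actus Digital's method. The `Interval` type, the labels and the timestamps (seconds from the start of a log) are hypothetical, and the ad intervals are assumed to have already been derived from SCTE triggers such as SCTE-35 splice events.

```python
from typing import NamedTuple


class Interval(NamedTuple):
    """A labeled time span in seconds (hypothetical structure)."""
    label: str
    start: float
    end: float


def overlaps(a, b):
    """True if the two half-open intervals [start, end) share any time."""
    return a.start < b.end and b.start < a.end


def make_good_candidates(ad_breaks, issues):
    """Return (ad label, [issue labels]) for every ad that overlaps an issue."""
    affected = []
    for ad in ad_breaks:
        hits = [issue.label for issue in issues if overlaps(ad, issue)]
        if hits:
            affected.append((ad.label, hits))
    return affected


# Ad airtimes, assumed to come from logged SCTE triggers.
ads = [
    Interval("ad-001", 600.0, 630.0),
    Interval("ad-002", 630.0, 660.0),
    Interval("ad-003", 1800.0, 1830.0),
]
# Faults recorded by the monitoring system.
issues = [
    Interval("black-frames", 640.0, 645.0),
    Interval("audio-silence", 1200.0, 1210.0),
]
candidates = make_good_candidates(ads, issues)
```

Only `ad-002` aired during a fault in this example, so it is the one ad flagged for follow-up before the advertiser has to ask.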

Joe Uberty, senior sales director at Vela, says the company’s Encompass monitor solution has the ability to monitor SCTE triggers, which helps broadcasters get additional revenue from the same content. But the ad content needs to be monitored as well to ensure the ads “follow the same value set the content owner may have,” he says.

Monitoring also ensures repurposed content played out by third parties such as Samsung TV does not conflict with the original rules for which ads could be played, he adds.

QOE As Revenue Generator

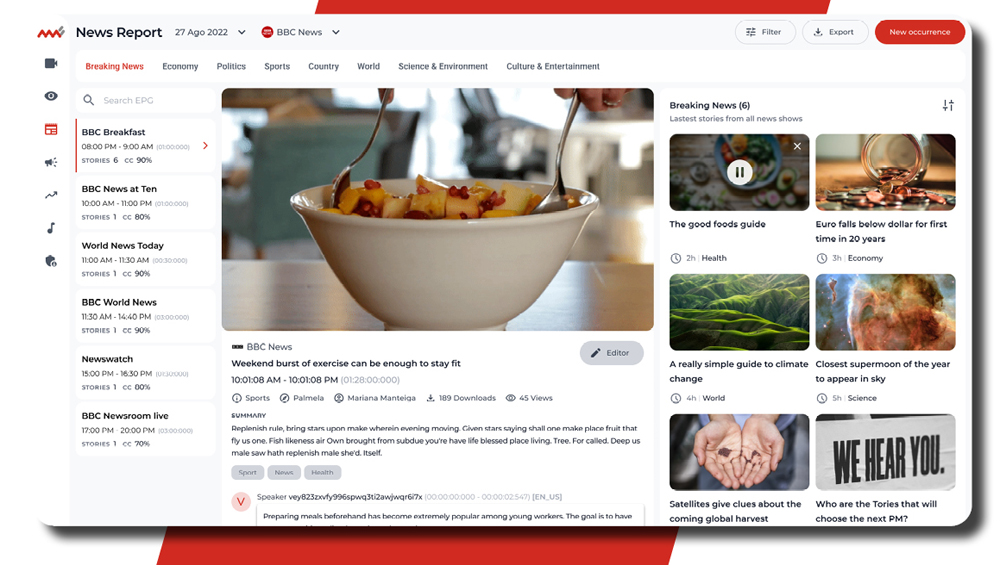

Voice Interaction CEO João P. Neto says one of the ways his company is working to help broadcasters bring in more revenue through QOE is through content personalization to maximize viewing time.

“The idea is to increase the quality of experience to do the matching between the knowledge and user profile as best as possible,” he says.

The news report dashboard of Voice Interaction’s MMS 7.0, with automatic segmentation and summary, topic and keyword detection, and related content.

Personalizing content requires access to analytics and gathering of metadata, Neto says. Voice Interaction is working with broadcasters to create different kinds of user groups and then recommend customized and intelligent content. The ability to personalize content draws on data available through compliance monitoring, he adds.

Yoann Hinard, Witbe COO, says the company’s customers use Witbe to remotely control physical devices. “It’s important to have real, physical devices that they can access remotely.”

Planche says the company has completely redesigned its Witbox product. “We used COVID to reinvent our hardware, make them much more scalable, make them easy to deploy,” he says.

Witbe’s robots provide performance data about the service, such as loading time, whether an ad interrupted content and loudness levels for ads within content, he says.

TAG’s Briscoe says QOS is “expected and normal.” But what’s important, he says, is testing that the quality of video and audio meets human expectations. That means knowing at what level of quality a human can detect a problem with the image or sound, he says.

That means “the picture looks good to the eye, the sound is pleasant to the ear,” the image is clear and the colors are right, he says. “It’s about the human experience, not the technical experience. We’re now measuring for humans, and this is exciting.”

The TAG monitoring platform is 100% software based.

Qligent’s Eksten says that the more complex the systems become, the more important automation becomes.

“I believe over the next few years we’re going to see a move even from QOS to become more audit-centric, meaning we’ll pay less attention to fluctuations in QOS,” Eksten says. “In an imperfect world, there’s no such thing as perfect QOS. But where are there potential issues, how quickly can we locate them?”
